HMRC knows about your Airbnb listing. It knows about the car you posted on Instagram last month. It cross-referenced your Land Registry records against your declared rental income sometime around Tuesday and flagged a discrepancy that a human investigator will now review over coffee.

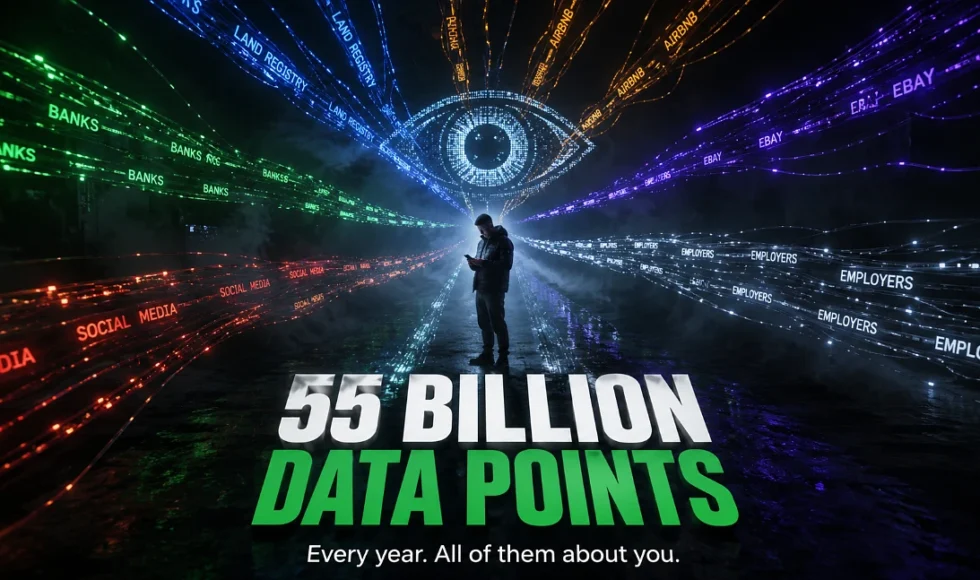

That isn’t speculation. HMRC’s Connect system, the data analytics platform sitting at the core of tax enforcement across Britain, analyses over fifty-five billion data points every year. It pulls from banks, utility companies, the Land Registry, Companies House, overseas tax authorities, online marketplaces like eBay and Etsy, and yes, publicly available social media. The system cost forty-five million pounds to build when it launched in 2010. In the 2024/25 tax year alone, the leads it generated helped recover an additional four point six billion pounds in tax revenue.

That number is about to get bigger. Chancellor Reeves identified a forty-seven billion pound tax gap in the 2025 Budget, and roughly seven billion of that is expected to be clawed back through expanded AI surveillance and compliance enforcement. HMRC isn’t hiring thousands of new investigators to do it. It is feeding Connect more data and letting the algorithms do the targeting.

How Connect actually decides you’re worth investigating

The system works by cross-matching data sources that most people assume exist in separate silos.

- Your bank reports interest payments to HMRC.

- The Land Registry shows property ownership.

- Estate agents share client lists.

- Airbnb and other platforms report booking income.

- Employers submit payroll data.

- Overseas jurisdictions share account information through automatic exchange agreements.

Connect layers all of this together and builds what amounts to a financial portrait. Then it compares that portrait against what you actually declared on your tax return.
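The cross-matching idea can be sketched in a few lines of Python. This is a toy illustration only, not how Connect is built: the taxpayer IDs, source names, amounts and the `tolerance` threshold are all hypothetical, and the real system's matching logic is not public.

```python
from collections import defaultdict

# Hypothetical third-party reports: (taxpayer_id, source, category, amount in GBP).
THIRD_PARTY_REPORTS = [
    ("TP001", "bank", "interest", 420.0),
    ("TP001", "platform", "rental", 9_800.0),
    ("TP001", "platform", "rental", 6_200.0),
    ("TP002", "employer", "employment", 31_000.0),
]

# Hypothetical figures declared on each taxpayer's return.
DECLARED = {
    "TP001": {"interest": 420.0, "rental": 9_000.0},
    "TP002": {"employment": 31_000.0},
}


def build_portrait(reports):
    """Sum reported amounts per taxpayer and income category."""
    portrait = defaultdict(lambda: defaultdict(float))
    for taxpayer_id, _source, category, amount in reports:
        portrait[taxpayer_id][category] += amount
    return portrait


def find_discrepancies(portrait, declared, tolerance=100.0):
    """List cases where reported income exceeds declared income by more than tolerance."""
    flagged = []
    for taxpayer_id, categories in portrait.items():
        for category, reported in categories.items():
            declared_amount = declared.get(taxpayer_id, {}).get(category, 0.0)
            if reported - declared_amount > tolerance:
                flagged.append((taxpayer_id, category, reported, declared_amount))
    return flagged


discrepancies = find_discrepancies(build_portrait(THIRD_PARTY_REPORTS), DECLARED)
```

Here the two platform reports add up to more rental income than the return shows, so `TP001` is the only taxpayer flagged. A flag at this stage is a lead, not a finding.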

Predictive modelling and risk scoring

The AI component does something genuinely sophisticated here: it doesn’t just look for missing income. It uses predictive modelling to score cases by risk, clustering taxpayers into groups and flagging outliers whose patterns look statistically unusual compared to others in their sector.

- A landlord declaring twelve thousand pounds in rental income when every comparable property in the same postcode generates twenty-five thousand is going to show up.

- A sole trader whose business expenses are three times the industry average is going to show up.

- Someone whose social media suggests a lifestyle that doesn’t match their declared earnings is absolutely going to show up.
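The landlord example can be reduced to a simple peer-group outlier test. The sketch below uses a robust z-score (median and median absolute deviation) as a stand-in technique; HMRC has not published its models, so the method, the 3.5 threshold and the landlord figures are all assumptions made for illustration.

```python
from statistics import median

# Hypothetical declared rental income (GBP) for comparable properties in one postcode.
DECLARED_RENTAL = {
    "landlord_a": 25_000,
    "landlord_b": 24_000,
    "landlord_c": 26_500,
    "landlord_d": 25_500,
    "landlord_e": 23_800,
    "landlord_f": 12_000,
}


def robust_z_scores(values_by_id):
    """Score each value by its distance from the group median, scaled by the MAD."""
    values = list(values_by_id.values())
    centre = median(values)
    mad = median(abs(v - centre) for v in values)
    if mad == 0:
        # Every value sits on the median, so nothing stands out.
        return {key: 0.0 for key in values_by_id}
    # 0.6745 rescales the MAD so scores are comparable to ordinary z-scores.
    return {key: 0.6745 * (v - centre) / mad for key, v in values_by_id.items()}


def flag_outliers(values_by_id, threshold=3.5):
    """Return the IDs whose robust z-score exceeds the threshold in either direction."""
    scores = robust_z_scores(values_by_id)
    return sorted(key for key, z in scores.items() if abs(z) > threshold)


flagged = flag_outliers(DECLARED_RENTAL)
```

Only the landlord declaring twelve thousand pounds is flagged. The median-based score is used here because a single extreme value distorts an ordinary mean and standard deviation, which is exactly the situation an outlier test has to handle.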

Social network analysis

Social network analysis is another layer. Connect maps relationships between people, companies and properties to identify hidden connections. When a director runs three companies that all transact with the same offshore entity in a jurisdiction known for secrecy, that pattern emerges without any human needing to spot it manually.
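The director example is, at heart, a graph query: link directors to companies, companies to counterparties, and look for counterparties shared across one director's companies. A minimal sketch follows, with invented names and a hypothetical `min_companies` threshold; it makes no claim about how Connect represents or queries its own graph.

```python
from collections import defaultdict

# Hypothetical edges: (director, company) and (company, counterparty).
DIRECTORSHIPS = [
    ("Director A", "Alpha Ltd"),
    ("Director A", "Beta Ltd"),
    ("Director A", "Gamma Ltd"),
    ("Director B", "Delta Ltd"),
]
TRANSACTIONS = [
    ("Alpha Ltd", "Offshore Co X"),
    ("Beta Ltd", "Offshore Co X"),
    ("Gamma Ltd", "Offshore Co X"),
    ("Delta Ltd", "Supplier Y"),
    ("Alpha Ltd", "Supplier Y"),
]


def shared_counterparties(directorships, transactions, min_companies=2):
    """Find counterparties that several companies under one director all transact with."""
    companies_by_director = defaultdict(set)
    for director, company in directorships:
        companies_by_director[director].add(company)

    counterparties_by_company = defaultdict(set)
    for company, counterparty in transactions:
        counterparties_by_company[company].add(counterparty)

    findings = {}
    for director, companies in companies_by_director.items():
        linked = defaultdict(set)
        for company in companies:
            for counterparty in counterparties_by_company.get(company, ()):
                linked[counterparty].add(company)
        hits = {cp: sorted(cs) for cp, cs in linked.items() if len(cs) >= min_companies}
        if hits:
            findings[director] = hits
    return findings


findings = shared_counterparties(DIRECTORSHIPS, TRANSACTIONS)
```

`Supplier Y` is not reported because no single director has two companies dealing with it. A shared counterparty is only a pattern; plenty of legitimate groups use one supplier or one bank.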

False positives and the cost of getting flagged

The system catches genuine evaders but it also flags innocent people. Kevin Igoe, Managing Director of PfP, has publicly noted that Connect can “easily produce false positives and trigger investigations into innocent individuals and businesses.”

When you’re one of those individuals receiving an HMRC enquiry letter because an algorithm decided your numbers looked odd, the experience is stressful and potentially expensive even if you’ve done nothing wrong. Professional representation during a Connect-triggered enquiry typically costs between two thousand and ten thousand pounds depending on complexity.

How prosecution teams are building cases now

It’s not just HMRC. The way financial crime gets investigated across all the major enforcement bodies has shifted fundamentally because of data analytics and the change happened faster than most people in the legal profession expected.

The FCA and data-led regulation

The FCA published its 2025 strategy making clear it was moving to what it called “data-led regulation.” In practical terms that means the FCA is using machine learning to monitor market activity in near real-time, identifying trading anomalies and potential insider dealing or market manipulation before opening formal investigations.

They’re commencing fewer investigations overall but the ones they do open are landing harder because the evidential foundation is already built by the time the target knows they’re being looked at.

The Serious Fraud Office

The Serious Fraud Office takes a different approach to the same underlying principle. In complex multi-jurisdictional cases like Ultra Electronics, the SFO traces financial flows across countries by analysing millions of transaction records and cross-referencing them against communication metadata, compliance documentation and whistleblower reports. The kind of pattern recognition that would have taken a team of forensic accountants months to assemble manually can now be generated in days.

Cross-border intelligence sharing

Europol’s European Financial and Economic Crime Centre, established in 2020, coordinates cross-border financial intelligence sharing across EU member states using centralised analytics platforms. Even post-Brexit, data sharing arrangements mean that transaction patterns identified by European authorities can surface in investigations being run by the NCA or SFO on this side of the channel.

How defence teams use the same tools

Here’s the part that doesn’t get discussed enough. The same analytical techniques that prosecutors use to build cases are available to defence teams, and competent defence work now involves running the prosecution’s dataset independently to find what they missed or got wrong.

Where Connect’s data gets it wrong

A pattern match in HMRC’s Connect system is only as reliable as the data behind it. Incomplete records, misclassified transactions, timing differences between income receipt and declaration, and erroneous data from third parties can all contribute to a narrative of avoidance when the reality is administrative chaos.

Defence analysts rebuild the transaction timeline from the source materials and compare it with what Connect assembled. The differences between the two versions form the foundation of the defence argument. The prosecution contends that the pattern reveals hidden income, while the defence contends that the pattern resulted from a timing difference between tax years or a data entry error at the bank.
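A timing difference of this kind is easy to demonstrate. The UK tax year runs from 6 April to 5 April, so a payment earned on 2 April but received on 8 April lands in different years depending on which date a dataset records. The sketch below uses hypothetical payments and assumes, purely for illustration, that the return was prepared on earned dates while the third-party feed records receipt dates; which basis is correct in a real case depends on the taxpayer's accounting method.

```python
from collections import defaultdict
from datetime import date

# Hypothetical rent payments: (amount in GBP, date earned, date received at the bank).
PAYMENTS = [
    (1_200.0, date(2024, 3, 1), date(2024, 3, 3)),
    (1_200.0, date(2024, 4, 2), date(2024, 4, 8)),  # straddles the 5 April year end
    (1_200.0, date(2024, 5, 1), date(2024, 5, 2)),
]


def uk_tax_year(d):
    """Label a date with its UK tax year, which runs 6 April to 5 April."""
    start_year = d.year if (d.month, d.day) >= (4, 6) else d.year - 1
    return f"{start_year}/{str(start_year + 1)[-2:]}"


def totals_by_tax_year(payments, use_received_date):
    """Total the payments per tax year using either the earned or the received date."""
    totals = defaultdict(float)
    for amount, earned_on, received_on in payments:
        totals[uk_tax_year(received_on if use_received_date else earned_on)] += amount
    return dict(totals)


def reconcile(payments):
    """Compare the third-party (received-date) view with the declared (earned-date) view."""
    declared_view = totals_by_tax_year(payments, use_received_date=False)
    third_party_view = totals_by_tax_year(payments, use_received_date=True)
    years = sorted(set(declared_view) | set(third_party_view))
    gaps = {y: third_party_view.get(y, 0.0) - declared_view.get(y, 0.0) for y in years}
    return gaps, sum(gaps.values())


gaps, net_gap = reconcile(PAYMENTS)
```

The per-year gaps are equal and opposite and the net gap is zero: the same income appears in both views, just in different years. A gap that nets to zero across adjacent years points to timing, whereas one that does not needs a different explanation.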

Risk modelling in defence work

Risk modelling has become part of the defence toolkit as well. Compliance officers and defence teams increasingly use methods like Monte Carlo simulation to assess exposure across multiple possible scenarios, running thousands of iterations to estimate the probability distribution of outcomes rather than relying on single-point estimates.

For a company facing a potential investigation the question isn’t just “are we liable” but “across all the variables we can model, what’s the range of financial exposure and which scenarios are most likely.” That probabilistic approach to risk is reshaping how organisations decide whether to self-report, how much to provision for potential penalties and how aggressively to contest HMRC’s interpretation of the data.
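A Monte Carlo exposure model can be sketched with nothing more than the standard library. Every input below is a hypothetical assumption chosen for illustration: the probability of an enquiry, the range of tax found to be due and the penalty rates are invented. Only the fee range of two to ten thousand pounds echoes the representation costs mentioned earlier in this article.

```python
import random
from statistics import mean, quantiles

# Hypothetical model inputs; a real exercise would estimate these from case facts.
P_ENQUIRY = 0.4                    # chance an enquiry is opened at all
TAX_DUE_LOW, TAX_DUE_HIGH, TAX_DUE_MODE = 0.0, 40_000.0, 8_000.0
PENALTY_LOW, PENALTY_HIGH = 0.0, 0.30
FEES_LOW, FEES_HIGH = 2_000.0, 10_000.0


def simulate_exposure(n_iterations=10_000, seed=42):
    """Draw one total-cost outcome (GBP) per iteration under the assumptions above."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    outcomes = []
    for _ in range(n_iterations):
        if rng.random() >= P_ENQUIRY:
            outcomes.append(0.0)  # no enquiry, no cost in this scenario
            continue
        tax_due = rng.triangular(TAX_DUE_LOW, TAX_DUE_HIGH, TAX_DUE_MODE)
        penalty_rate = rng.uniform(PENALTY_LOW, PENALTY_HIGH)
        fees = rng.uniform(FEES_LOW, FEES_HIGH)
        outcomes.append(tax_due * (1 + penalty_rate) + fees)
    return outcomes


def summarise(outcomes):
    """Report the mean plus the 50th and 95th percentiles of simulated exposure."""
    cuts = quantiles(outcomes, n=100)  # 99 cut points; cuts[k - 1] is the k-th percentile
    return {"mean": mean(outcomes), "p50": cuts[49], "p95": cuts[94]}


summary = summarise(simulate_exposure())
```

The useful output is the spread rather than any single figure: the gap between the median and the 95th percentile is what informs how much to provision and whether contesting is worth the cost. Changing the assumed inputs changes the answer, which is the main reason to state them explicitly.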

Algorithm-driven enforcement and legal safeguards

There’s an uncomfortable question sitting underneath all of this and it hasn’t been answered properly by any government or regulator.

When a system analyses fifty-five billion data points and uses predictive modelling to decide which taxpayers deserve investigation, what happens to the presumption of innocence? You haven’t been accused of anything. No human has reviewed your affairs. An algorithm assigned you a risk score based on statistical patterns and now you’re explaining your finances to an HMRC officer who arrived with a conclusion already half-formed by the data.

The EU AI Act and post-Brexit position

AI systems employed in tax administration and law enforcement are categorised as “high risk” under the EU’s AI Act, which entered into force in August 2024 and calls for frequent audits, human oversight, and transparency. The Act doesn’t apply directly in Britain after Brexit, but it establishes a benchmark that courts and regulators will increasingly turn to when the fairness of algorithm-driven enforcement is contested.

According to HMRC, all final decisions are made by human investigators, and Connect produces leads rather than conclusions. Technically, that is accurate. However, the practical impact on the course of investigations is substantial when the system has already created a financial portrait that appears incriminating before any human is involved. The defence community has begun to challenge this, contending that the same rules that mandate the sharing of prosecution evidence should also apply to the disclosure of algorithm-generated risk rankings to the taxpayer.

As the systems get more capable and the revenue expectations more aggressive, that discussion will become more heated. Recovering £4.6 billion in a single year is already remarkable. The new goal is seven billion. The investigations will continue to get more focused, and the algorithms will continue to be fed. Whether the safeguards can keep pace with the capabilities is genuinely unclear.